Google is tightening the rules — and most sites are not ready

A client called us a few weeks ago, in a panic. Their organic traffic had dropped 30% in two months. No manual penalty. No duplicate content. Just a misconfigured robots.txt file and product listings that were invisible in Google Shopping.

The result: hundreds of pages crawled uselessly, and their real product pages ignored by Google.

We’re seeing this scenario more and more often. Google is no longer forgiving about technical precision. Crawl rules are evolving, structured data requirements are tightening, and a new report in Google Search Console has just changed the game for e-commerce businesses.

The question is no longer “is Google indexing me?” but “is Google indexing what I want, the way I want it?”

Robots.txt: what Google is changing — and why it really matters

The robots.txt file is your gatekeeper. You tell Googlebot what it can crawl and what it cannot. Simple in theory. Complex in practice.

Google has announced changes to the interpretation of robots.txt directives. The goal: more precision, less room for ambiguity. Concretely, some directives that were “tolerated” even when poorly written will now be interpreted more strictly.

What this means for your site:

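Contradictory directives will cause problems. Take a Disallow: / followed by an Allow: /blog/ in the same block. Google does not apply these rules in file order. It uses the most specific one, meaning the longest matching path, and when an Allow and a Disallow match with the same length, the Allow wins. Your blog stays crawlable for Googlebot. But some other crawlers still apply the first matching rule, and one overly broad rule can block entire sections of your site.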

Misused wildcards will be costly. Patterns like Disallow: /*? (blocking all URLs with parameters) are common. But if your e-commerce filter URLs use legitimate parameters for important pages, you’ve just removed them from Google’s radar.

The legacy of “default” configurations is becoming risky. Many sites run with a robots.txt automatically generated by WordPress, PrestaShop, or a theme 5 years ago. Nobody has touched it since. These files often contain residual blocks that no longer serve any purpose.
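The first two points can be sketched in Python. This minimal model follows the precedence Google documents: among the rules that match a URL, the longest pattern wins, and an Allow beats a Disallow of the same length. It also handles the * and $ wildcards. It is a simplified model for testing rules offline, not Google's own parser (Google publishes that as an open-source C++ library), and the rules and URLs are hypothetical. Python's built-in urllib.robotparser won't do here, because it applies the first matching rule in file order and does not support * wildcards inside paths.

```python
import re


def rule_regex(pattern: str) -> re.Pattern:
    """Compile a robots.txt path pattern: '*' = any characters, trailing '$' = end of URL."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    regex = "".join(".*" if ch == "*" else re.escape(ch) for ch in body)
    return re.compile(regex + ("$" if anchored else ""))


def is_allowed(rules: list[tuple[str, str]], path: str) -> bool:
    """Decide whether `path` (path + query string) may be crawled.

    Simplified model of Google's documented precedence: among matching rules,
    the longest pattern wins; on a tie, Allow beats Disallow. File order plays
    no part. If no rule matches, the URL is allowed.
    """
    best_length, allowed = -1, True
    for directive, pattern in rules:
        if not pattern:  # an empty "Disallow:" line blocks nothing
            continue
        if rule_regex(pattern).match(path):  # match() anchors at the start of the path
            is_allow = directive.lower() == "allow"
            if len(pattern) > best_length or (len(pattern) == best_length and is_allow):
                best_length, allowed = len(pattern), is_allow
    return allowed


# Hypothetical rules: precedence does not depend on order.
rules = [("Disallow", "/"), ("Allow", "/blog/")]
print(is_allowed(rules, "/blog/seo-news"))        # True: "/blog/" is longer than "/"
print(is_allowed(rules[::-1], "/blog/seo-news"))  # True: same result in reverse order
print(is_allowed(rules, "/shop/shoes"))           # False

# Hypothetical filter URLs: "/*?" blocks every URL with a query string,
# unless a longer Allow pattern carves out the parameters you want crawled.
filters = [("Disallow", "/*?"), ("Allow", "/category/*?color=")]
print(is_allowed(filters, "/category/shoes?color=red"))   # True
print(is_allowed(filters, "/category/shoes?sort=price"))  # False
```

Modelling precedence on pattern length, rather than on position in the file, is what makes reordering the lines harmless for Googlebot.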

After 15 years of technical audits, the observation is always the same: robots.txt is the most neglected file on websites. And it’s often where unexplained traffic losses are hiding. To go further on what Google actually reads when it visits your site, read our article on what Google really sees during its exploration.

How to audit your robots.txt now

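No complex tool needed to get started. The robots.txt report in Google Search Console (under Settings) shows which robots.txt files Google found, when it last fetched them, and any errors or warnings. To check a specific URL, run it through the URL Inspection tool, which tells you whether robots.txt blocks crawling.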

What you should check as a priority:

- Are your product and category pages crawlable?

- Are your sorting/filter parameter URLs handled correctly?

- Are your CSS and JavaScript resources accessible? (Google needs them to render your pages the way visitors see them)

- Is your sitemap declared with a Sitemap: line? It can sit anywhere in the file, though the end is the usual convention.
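Before testing individual URLs, check which group of rules Googlebot actually follows. A frequent surprise is that when the file contains a User-agent: Googlebot group, Googlebot obeys only that group and ignores everything under User-agent: *. The Python sketch below extracts the applicable Allow/Disallow rules and the Sitemap lines from a robots.txt body. It simplifies Google's matching (exact user-agent names only), and the example file is hypothetical.

```python
def googlebot_rules(robots_txt: str, agent: str = "googlebot"):
    """Return (rules, sitemaps) for `agent` from a robots.txt body.

    Simplified model of Google's documented behaviour:
    - consecutive User-agent lines open one group that shares the rules below them;
    - a crawler obeys only the group(s) naming it, falling back to '*' if none does;
    - Sitemap lines belong to no group and may appear anywhere in the file.
    """
    groups = []  # each entry: (set of lowercase agent names, list of (directive, path))
    sitemaps = []
    agents, rules = None, None
    previous_was_agent = False
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments and whitespace
        if ":" not in line:
            continue
        field, value = (part.strip() for part in line.split(":", 1))
        field = field.lower()
        if field == "user-agent":
            if not previous_was_agent:  # a new group starts here
                agents, rules = set(), []
                groups.append((agents, rules))
            agents.add(value.lower())
            previous_was_agent = True
        elif field in ("allow", "disallow"):
            if rules is not None:  # rules before any User-agent line are ignored
                rules.append((field.capitalize(), value))
            previous_was_agent = False
        elif field == "sitemap":
            sitemaps.append(value)

    agent = agent.lower()
    chosen = [group_rules for names, group_rules in groups if agent in names]
    if not chosen:
        chosen = [group_rules for names, group_rules in groups if "*" in names]
    merged = [rule for group_rules in chosen for rule in group_rules]
    return merged, sitemaps


example = """User-agent: *
Disallow: /cart/
Disallow: /*?

User-agent: Googlebot
Disallow: /admin/

Sitemap: https://www.example.com/sitemap.xml
"""
rules, sitemaps = googlebot_rules(example)
print(rules)     # [('Disallow', '/admin/')]: the '*' rules do not apply to Googlebot
print(sitemaps)  # ['https://www.example.com/sitemap.xml']
```

If Googlebot's rule list comes back shorter than you expected, rules you wrote for all crawlers aren't reaching Google.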

An honest audit takes 2 hours on a standard site. On an e-commerce site with 5,000 products, allow a full day.

The new “Merchant Listings” report in Google Search Console: what it changes for e-commerce businesses

This is the real novelty that should make every online store owner sit up and take notice.

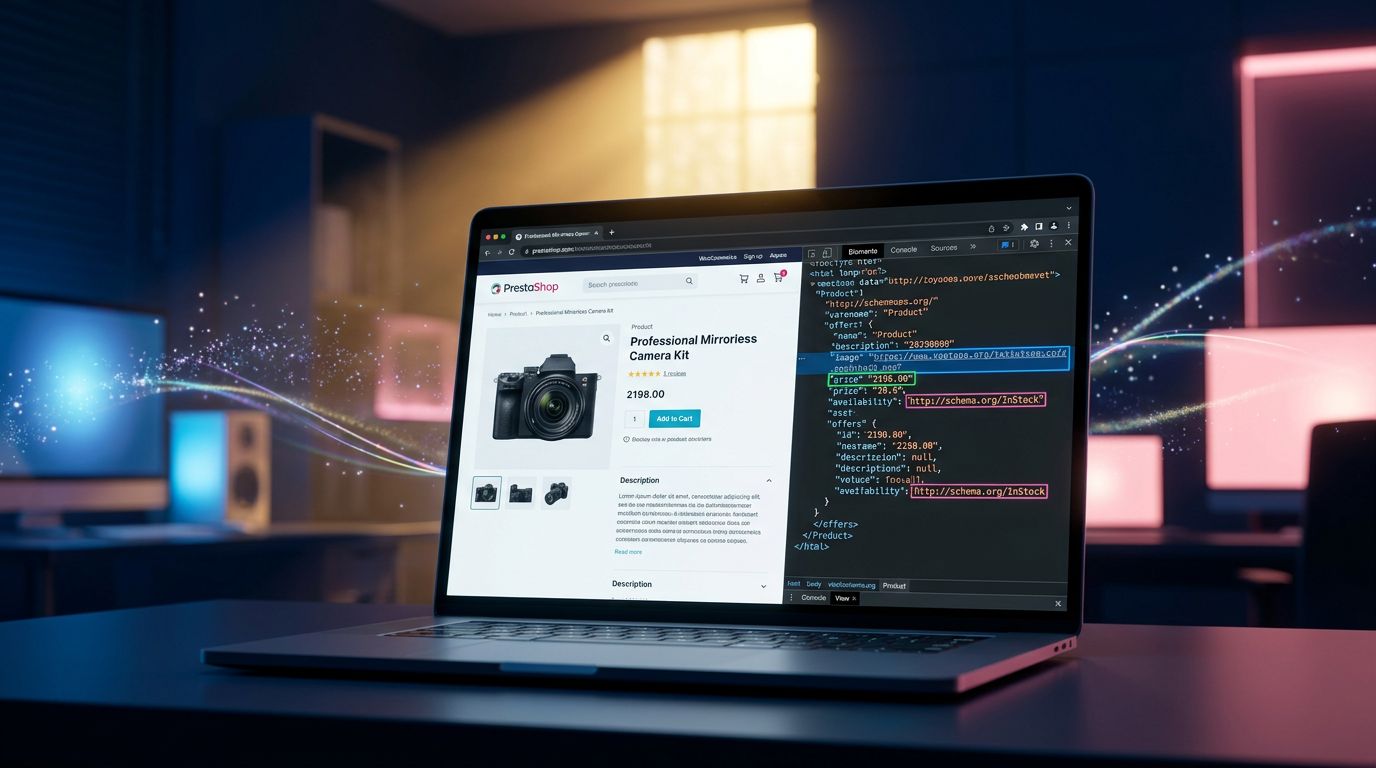

Google has deployed a dedicated report for Merchant Listings — the rich product listings that appear in Google Shopping, search results with price and availability, and product knowledge panels. This report is now directly accessible in Search Console, with an unprecedented level of detail.

“Structured data is the language you use to speak directly to Google. If your grammar is bad, Google doesn’t understand — and your products don’t appear.”

Concretely, this report gives you:

The actual coverage of your product listings. How many of your products are eligible for rich display? How many are actually displayed? The gap between the two is your immediate margin for improvement.

Structured data errors by type. Missing price, availability not set, image not compliant with Google standards — each error is categorised. You know exactly what to fix, in what order.

The distinction between blocking errors and warnings. A blocking error = your listing doesn’t appear. A warning = it appears, but under-optimised. The nuance is important for prioritising your actions.

The most common errors we see on online stores

On the e-commerce sites we audit regularly, three problems come up systematically:

1. The price is missing or poorly formatted

Google requires a price with explicit currency in Schema.org markup. "price": "29.90" without "priceCurrency": "EUR"? Invalid listing. It’s a silly mistake, but it easily affects 20 to 30% of products on quickly built stores.

2. Availability never updated

The availability field in Schema.org must reflect the actual stock status. A product marked InStock when it’s been out of stock for 3 weeks? Google can detect the mismatch with your product page and demote or drop the listing, and repeated mismatches erode the trust Google places in your product data.

3. Non-compliant images

Google Shopping has precise requirements: main image on a white or neutral background, at least 100x100 pixels (250x250 for apparel), no promotional text overlaid. Many stores use their “marketing” images with a “PROMO -20%” banner, and end up with blocked listings without understanding why.
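The first two errors are easy to catch automatically. Here is a minimal Python sketch that checks a product's JSON-LD offer for a parseable price, an ISO 4217 currency code and a known schema.org availability value, then compares availability with stock. The `stock_quantity` argument is a hypothetical input from your own inventory system, and the check doesn't replace the Merchant Listings report or Google's validators. Image requirements (background, overlays) need a human eye and aren't covered.

```python
import json

# Common schema.org ItemAvailability values (not an exhaustive list).
KNOWN_AVAILABILITY = {
    "https://schema.org/InStock",
    "https://schema.org/OutOfStock",
    "https://schema.org/PreOrder",
    "https://schema.org/BackOrder",
}


def offer_issues(product_jsonld: str, stock_quantity: int) -> list[str]:
    """Return the problems found in a Product JSON-LD block's offer."""
    product = json.loads(product_jsonld)
    offer = product.get("offers", {})
    if isinstance(offer, list):  # several offers: this sketch checks the first one only
        offer = offer[0] if offer else {}
    issues = []

    price = offer.get("price")
    try:
        float(price)  # fails on None and on "29,90" (schema.org expects a dot)
    except (TypeError, ValueError):
        issues.append(f"missing or malformed price: {price!r}")

    currency = offer.get("priceCurrency", "")
    if not (isinstance(currency, str) and len(currency) == 3
            and currency.isalpha() and currency.isupper()):
        issues.append("missing or malformed priceCurrency (expected an ISO 4217 code such as EUR)")

    availability = offer.get("availability")
    if not isinstance(availability, str) or availability not in KNOWN_AVAILABILITY:
        issues.append(f"unexpected availability value: {availability!r}")
    elif availability.endswith("/InStock") and stock_quantity <= 0:
        issues.append("marked InStock but nothing in stock")
    return issues


good = ('{"@type": "Product", "name": "Linen shirt", "offers": {"@type": "Offer", '
        '"price": "29.90", "priceCurrency": "EUR", "availability": "https://schema.org/InStock"}}')
print(offer_issues(good, stock_quantity=12))  # []

bad = ('{"@type": "Product", "name": "Linen shirt", "offers": {"@type": "Offer", '
       '"price": "29.90", "availability": "https://schema.org/InStock"}}')
print(offer_issues(bad, stock_quantity=0))  # two issues: no currency, InStock with zero stock
```

Run against an export of your catalogue, a check like this flags the "price without currency" and "InStock but sold out" cases before Google does.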

Structured data: the technical investment that really pays off

This is where it gets interesting.

Structured data is not a technical detail reserved for large retailers. It’s the most under-exploited lever for e-commerce SMEs. And the Merchant Listings report in Search Console has just made this investment much more measurable.

Before this report, you had a vague idea of whether your products appeared in Google Shopping. Now, you have data product by product, error by error. It’s the difference between “I think my markup is correct” and “Google tells me exactly what’s wrong with 47 of my 312 products”.

What we’ve observed on recent projects: fixing structured data errors on a medium-sized PrestaShop store generates on average 15 to 25% additional impressions in rich results within 6 to 8 weeks. No magic — just technical precision. These optimisations are part of a global SEO strategy we implement for our clients.

“SEO in 2026 is 40% content and 60% technical precision. Most agencies sell content and cut corners on the technical side.”

Correctly implementing Product Schema.org markup takes between 1 and 3 days depending on the complexity of your catalogue and platform. Fill in every field Google uses: name, image, price and currency are required for merchant listings; description, availability, brand and GTIN (when you have one) are recommended. PrestaShop and WooCommerce both have modules for this, but their default configuration is rarely optimal: you need to check and adjust it.
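What should a correct implementation output? Below is a sketch in Python for a single product with one offer. The function name and parameters are hypothetical, and in practice your PrestaShop or WooCommerce module generates this markup for you. Comparing this shape with what your module prints in the page source is a quick way to spot missing fields.

```python
import json
from typing import Optional


def product_jsonld(name: str, description: str, image_url: str, brand: str,
                   price: float, currency: str, in_stock: bool,
                   gtin13: Optional[str] = None) -> str:
    """Build a <script> tag holding Product JSON-LD for one product page."""
    product = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "image": [image_url],
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",    # dot as decimal separator, e.g. "29.90"
            "priceCurrency": currency,  # ISO 4217 code, e.g. "EUR"
            "availability": ("https://schema.org/InStock" if in_stock
                             else "https://schema.org/OutOfStock"),
        },
    }
    if gtin13:  # only when you actually have a GTIN; never invent one
        product["gtin13"] = gtin13
    payload = json.dumps(product, ensure_ascii=False)
    # Escape "</" so a description containing "</script>" cannot close the tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">{payload}</script>'


print(product_jsonld("Linen shirt", "100% linen shirt",
                     "https://www.example.com/img/linen-shirt.jpg", "Acme",
                     29.9, "EUR", in_stock=True))
```

Feeding `in_stock` from the live inventory, rather than hard-coding it in a template, is what keeps the availability field honest as stock moves.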

What this concretely means for your SEO strategy

These two developments — the increased rigour around robots.txt and the new Merchant Listings report — point in the same direction.

Google wants precision. Not approximation.

The sites that will benefit are those that treat technical SEO as an ongoing project, not a box to tick once a year. Here’s what this concretely means:

A robots.txt audit at least once a year, and at every redesign or migration. That’s 2 hours of work that can prevent months of traffic loss.

Monthly monitoring of the Merchant Listings report if you have an online store. Errors accumulate progressively — a new product poorly configured, a plugin update that breaks the markup, a stock change not reflected. A regular check is enough to fix them before they impact your visibility.

Schema.org markup maintained, not just installed. The classic mistake: you install a structured data plugin, validate it once in the Google Rich Results Test, and never touch it again. Except that the catalogue evolves, prices change, stock moves. The markup must follow.

Coordination between your technical team and your marketing team. How many times do we see product campaigns launched without anyone having checked whether the corresponding listings are correctly marked up? The Merchant Listings report must become a shared tool, not an isolated technical report. For a complete view of the SEO foundations to master, our technical SEO guide details every layer to audit.

3 actions to implement this week

No endless list. Three concrete actions, in order of priority:

Action 1: Check your robots.txt now. In Google Search Console, open Settings > robots.txt to confirm Google fetched your file without errors, then run your 10 most important URLs through the URL Inspection tool. If any of them is blocked without a valid reason, it’s urgent.

Action 2: Open the Merchant Listings report if you have an online store (Search Console > Shopping > Merchant listings). Look at your coverage rate and the list of blocking errors. If the report doesn’t appear at all, Google hasn’t detected product structured data on your pages yet, and that’s the first thing to fix.

Action 3: Validate your Schema.org markup on 5 representative product pages. Use Google’s Rich Results Test tool. Five minutes per page. You’ll know exactly where you stand.
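For more than a handful of pages, a local pre-check can help you pick which pages to run through the Rich Results Test. This Python sketch, using only the standard library, pulls JSON-LD blocks out of saved page HTML and keeps the Product objects, including those nested in an "@graph" array. It assumes JSON-LD markup (microdata and RDFa are ignored) and only compares "@type" against the plain string "Product". Google's tool remains the reference.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the raw text of every <script type="application/ld+json"> element."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._chunks = None  # a list while inside a JSON-LD script, otherwise None

    def handle_starttag(self, tag, attrs):
        if tag == "script" and (dict(attrs).get("type") or "").lower() == "application/ld+json":
            self._chunks = []

    def handle_data(self, data):
        if self._chunks is not None:
            self._chunks.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._chunks is not None:
            self.blocks.append("".join(self._chunks))
            self._chunks = None


def product_objects(html: str) -> list:
    """Return the Product objects declared in a page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    collector.close()
    products = []
    for raw in collector.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # invalid JSON is skipped in this sketch; fix it first
        items = data if isinstance(data, list) else [data]
        for item in items:
            # Some plugins wrap all objects in an "@graph" array.
            nested = item.get("@graph", []) if isinstance(item, dict) else []
            for obj in [item, *nested]:
                if isinstance(obj, dict) and obj.get("@type") == "Product":
                    products.append(obj)
    return products


page = ('<html><head><script type="application/ld+json">'
        '{"@context": "https://schema.org", "@type": "Product", "name": "Linen shirt"}'
        '</script></head></html>')
print([p["name"] for p in product_objects(page)])  # ['Linen shirt']
```

A page that returns an empty list here has no Product markup Google can read, so it goes to the top of the list for the Rich Results Test.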

Conclusion: technical SEO is no longer optional

E-commerce SMEs that will stand out in 2026 will not necessarily be those with the biggest advertising budget. They will be the ones whose site is technically impeccable — because Google gives them access to rich display formats that their competitors miss through negligence.

Robots.txt, structured data, the Merchant Listings report: these are not glamorous subjects. But they are measurable, actionable levers with a documentable ROI.

At GDM-Pixel, this is exactly the type of audit we carry out before touching anything else on a site, because experience has taught us that a beautiful but technically misconfigured site is a dormant investment.

Want to know where your site stands on these points? We do a technical diagnosis in 48 hours. No unreadable 80-page report — a prioritised action list with the estimated impact on your visibility. Contact us and we’ll look at it together.